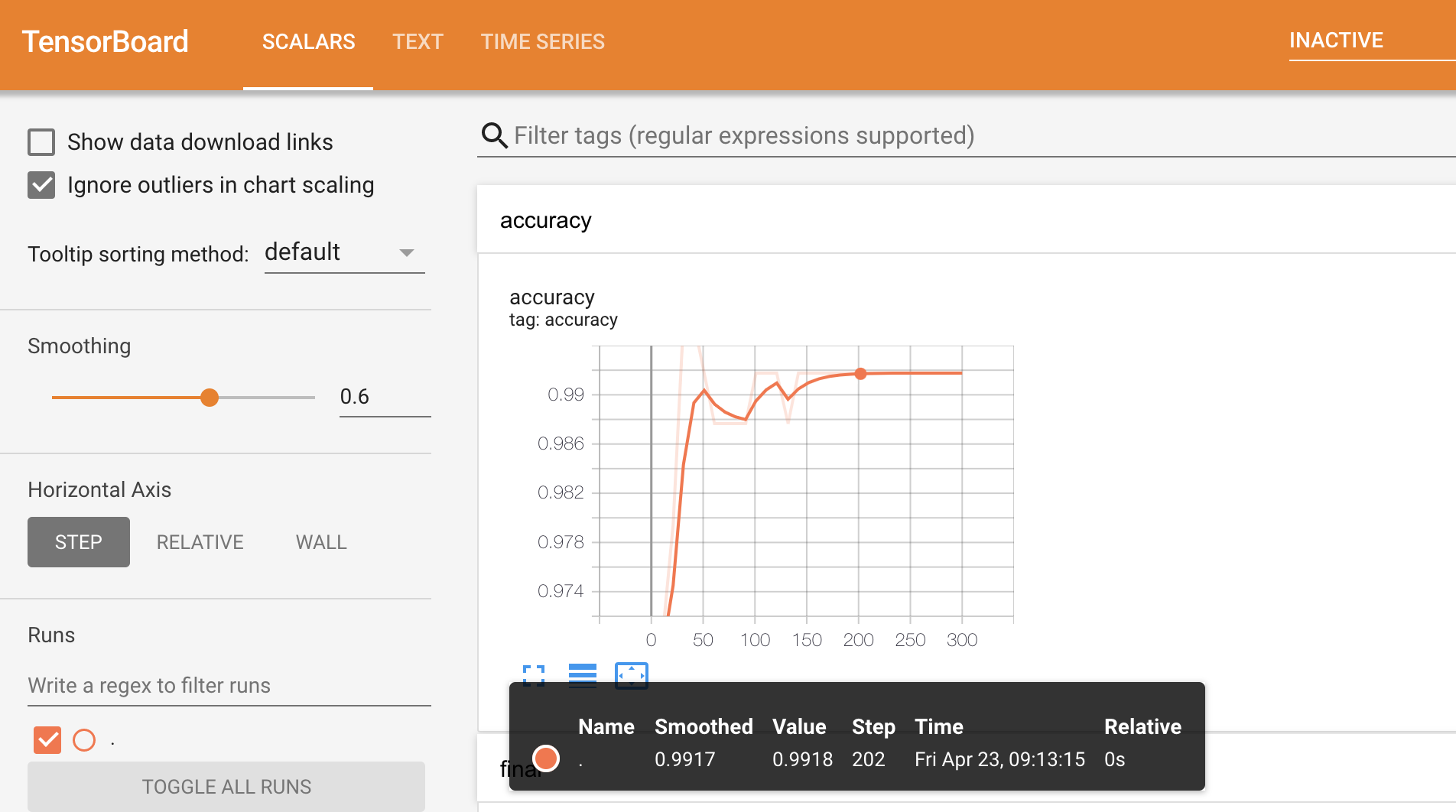

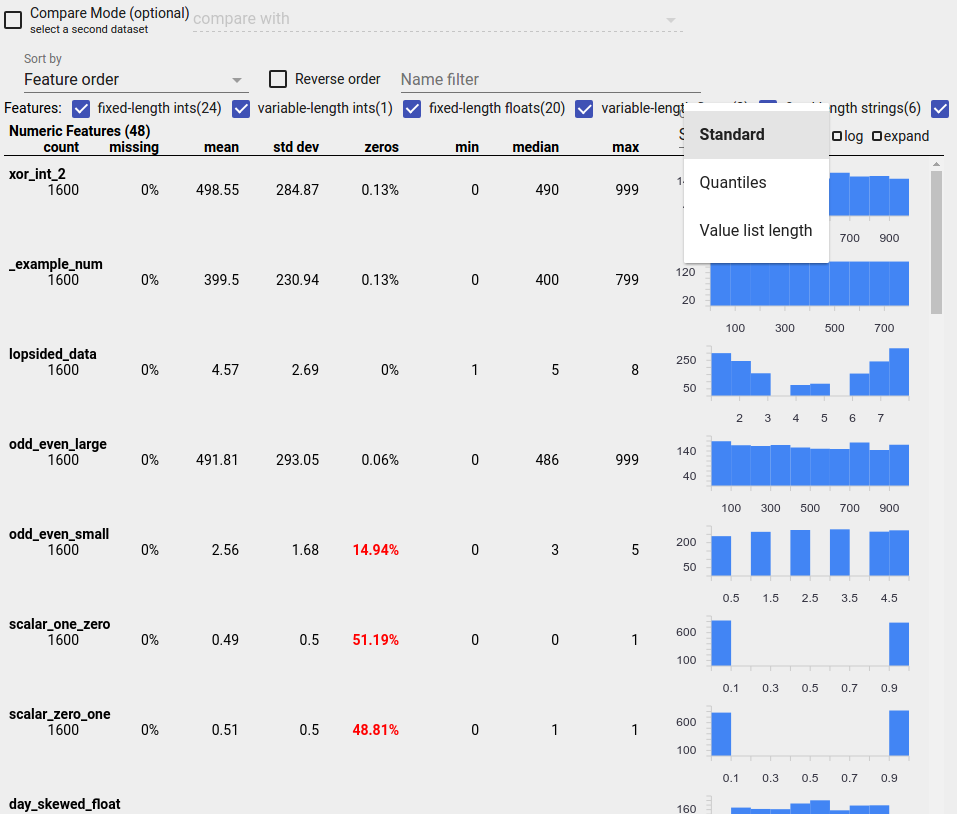

# TensorFlow Board View Values

TensorFlow is an open-source software library for numerical computation using data flow graphs. Nodes in the graph represent mathematical operations, while the graph edges represent the multidimensional data arrays (tensors) that flow between them. This flexible architecture lets you deploy computation to one or more CPUs or GPUs in a desktop or server.

TensorFlow was originally developed by researchers and engineers working on the Google Brain team within Google's Machine Intelligence research organization for the purposes of conducting machine learning and deep neural network (DNN) research. It is general enough to be applicable in a wide variety of other domains as well.

For visualizing TensorFlow results, the Docker® image also contains TensorBoard. For example, you can view the training histories as well as what the model looks like.

For information about the optimizations and changes that have been made to TensorFlow, see the TensorFlow Deep Learning Frameworks Release Notes.

The core of NVIDIA TensorRT is a C++ library that facilitates high-performance inference on NVIDIA graphics processing units (GPUs). It takes a trained network, which consists of a network definition and a set of trained parameters, and produces a highly optimized runtime engine which performs inference for that network.

TensorRT provides APIs via C++ and Python that help to express deep learning models via the Network Definition API, or to load a pre-defined model via the parsers, so that TensorRT can optimize and run them on an NVIDIA GPU. TensorRT applies graph optimizations and layer fusion, among other optimizations, while also finding the fastest implementation of that model by leveraging a diverse collection of highly optimized kernels. TensorRT also supplies a runtime that you can use to execute this network on all of NVIDIA's GPUs from the Kepler generation onwards.

TensorRT also includes high-speed mixed-precision and Tensor Core routines. For information about the optimizations and changes that have been made to TensorRT, see the TensorRT Release Notes. For specific TensorRT product documentation, see the TensorRT documentation.

If you have a SavedModel representation of your TensorFlow model, you can create a TensorRT inference graph directly from your SavedModel, for example:

    from tensorflow.python.compiler.tensorrt import trt_convert as trt

    conversion_params = trt.DEFAULT_TRT_CONVERSION_PARAMS
    conversion_params = conversion_params._replace(...)
    conversion_params = conversion_params._replace(precision_mode="FP16")
    ...
        input_saved_model_dir=input_saved_model_dir,
    ...
    # Input for a single inference call, for a network that has two input tensors:
    ...
    saved_model_loaded = tf.saved_model.load(...)
    graph_func = saved_model_loaded.signatures[
        signature_constants.DEFAULT_SERVING_SIGNATURE_DEF_KEY]
    frozen_func = convert_to_constants.convert_variables_to_constants_v2(...)

The constructor of `TrtGraphConverter` supports the following optional arguments. The most important of these arguments, which are used to configure TF-TRT or TensorRT to get better performance, are explained in the following sections of this chapter.

- The SavedModel which contains the input graph to transform. Default value is `None`.
- A list of tags used to load the SavedModel. Default value is `None`.
- The key of the signature. Default value is `None`.
- A `GraphDef` object containing a model to be transformed. Default value is `None`, in which case the graph is read from the loaded SavedModel.
- A list of node names. Default value is `None`.
- A template used to create a TRT-enabled `ConfigProto` for conversion. Default value is `None`; if not specified, a default `ConfigProto` is used.
- The maximum size for the input batch. Default value is 1.
- The maximum GPU temporary memory which the TensorRT engine can use at execution time, as set through `nvinfer1::IBuilder::setMaxWorkspaceSize()`. Default value is 1GB.
- The precision mode, which is one of `TrtPrecisionMode.supported_precision_modes()`; in other words, "FP32", "FP16" or "INT8" (lowercase is also supported). Default value is `TrtPrecisionMode.FP32`.
- The minimum number of nodes required for a subgraph to be replaced by a TensorRT op. Default value is 3.
- Whether to use TensorRT ops which build the TensorRT network and engine at run time. Default value is `False`.
- The maximum number of cached engines. Default value is 1. This limit governs the case where the number of cached engines is already at max but none of them can serve the input.

TensorRT engines are created in TensorFlow for each TensorRT subgraph.
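The conversion parameters in this text are updated with `_replace`, which returns a modified copy rather than changing the defaults in place. The sketch below imitates that pattern in plain Python so it runs without TensorFlow or TensorRT installed. `ConversionParams`, its field names, `normalize_precision_mode`, and `with_precision` are hypothetical stand-ins invented for this illustration, not the TF-TRT API; only the default values and the list of precision modes come from the text.

```python
from collections import namedtuple

# Hypothetical stand-in for the TF-TRT conversion parameters. This is NOT the
# real TensorFlow type; the field names are illustrative and the defaults
# simply mirror the values quoted in the text.
ConversionParams = namedtuple(
    "ConversionParams",
    [
        "max_batch_size",
        "max_workspace_size_bytes",
        "precision_mode",
        "minimum_segment_size",
        "is_dynamic_op",
        "maximum_cached_engines",
    ],
)

DEFAULT_PARAMS = ConversionParams(
    max_batch_size=1,
    max_workspace_size_bytes=1 << 30,  # 1GB
    precision_mode="FP32",
    minimum_segment_size=3,
    is_dynamic_op=False,
    maximum_cached_engines=1,
)

# Precision modes named in the text; lowercase spellings are also accepted.
SUPPORTED_PRECISION_MODES = ("FP32", "FP16", "INT8")


def normalize_precision_mode(mode):
    """Return the upper-case precision mode, accepting lower-case input."""
    upper = mode.upper()
    if upper not in SUPPORTED_PRECISION_MODES:
        raise ValueError(f"unsupported precision mode: {mode!r}")
    return upper


def with_precision(params, mode):
    """Return a copy of params with a validated precision mode."""
    return params._replace(precision_mode=normalize_precision_mode(mode))


fp16_params = with_precision(DEFAULT_PARAMS, "fp16")
print(fp16_params.precision_mode)     # FP16
print(DEFAULT_PARAMS.precision_mode)  # FP32 (the defaults are unchanged)
```

Because a named tuple is immutable, each `_replace` call yields a new parameter set, so several configurations (for example one per precision mode) can be derived from the same defaults without interfering with each other.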